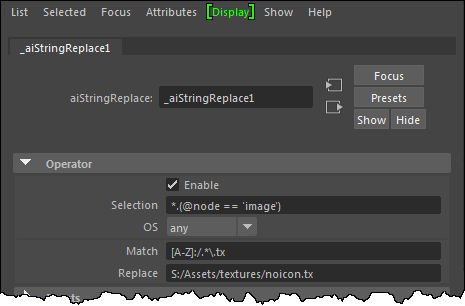

Arnold 6.0.2 includes a new string_replace operator that lets you find and replace text in string parameters.

For example, I got an ass procedural file from a user, but not the textures. Normally I would just enable Ignore Textures, but this time I used string_replace to point every texture at my trusty old Softimage noicon pic.

- Selection selects all Arnold image nodes, so the string replace operation applies to every string parameter of the image node (name, uvset, and color_space as well as filename).

- Match is a regular expression that matches anything that looks like C:/project/sourceimages/example.tx or X:/temp/test/example.tx

  - [A-Z] matches any drive letter from A to Z

  - .* matches any string of characters

  - Note that Match is a regular expression, so you cannot use glob wildcards like this: C:\sourceimages\*.tx. In a regular expression, * does not mean "any string of characters"; it means zero or more occurrences of the preceding character.

  - \.tx matches a period followed by “tx”. The period has to be escaped with a backslash because an unescaped ‘.’ matches any single character.

  - Note that this match expression won’t handle something like example.txtra.tx

- Replace is the string that replaces anything matched by the Match expression.
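
To get a feel for how the pieces of the Match expression fit together, here is a minimal sketch in plain Python. It is not the Arnold operator itself: the pattern is only my assumption of how the pieces described above combine, the replacement path is made up, and Python's `re` module may not behave exactly like the regex engine Arnold uses.

```python
import re

# Assumed pattern, assembled from the pieces described above
# ([A-Z], .*, \.tx); the exact expression in the operator may differ.
MATCH = r"[A-Z]:.*\.tx"

# Hypothetical replacement path, for illustration only.
REPLACE = "C:/textures/noicon.tx"


def replace_texture_path(value: str) -> str:
    """Replace anything that looks like a drive-letter path ending in .tx."""
    return re.sub(MATCH, REPLACE, value)


# A glob-style pattern used as a regex by mistake. Here "/*" means
# "zero or more slashes" and "." means "any single character".
GLOB_AS_REGEX = r"C:/sourceimages/*.tx"

if __name__ == "__main__":
    for value in (
        "C:/project/sourceimages/example.tx",
        "X:/temp/test/example.tx",
        "sRGB",  # a color_space-like value, left untouched
    ):
        print(value, "->", replace_texture_path(value))

    # The glob-style pattern does not find the file you would expect...
    print(re.search(GLOB_AS_REGEX, "C:/sourceimages/example.tx"))
    # ...but it does match this odd string: "/" then any character then "tx".
    print(re.search(GLOB_AS_REGEX, "C:/sourceimages/atx"))
```

Values that do not match, such as a color space name, pass through unchanged, which is why it is safe to let the operator run over every string parameter of the image nodes.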